My configuration? A head, two arms and hands, two legs …

Unknown

Recently I had a conversation about how to evaluate a mobile handheld measurement tool. It could either be used on its own, or together with a mobile device.

Problem being — the student in question wanted to simulate the interaction on a PC.

Either I misunderstood the whole setup (possible, yet unlikely) or this is a really bad idea. After all, the mobile handheld measurement device is meant to be used, e.g., on construction sites and in stop-and-search operations on the street. Evaluating this kind of scenario on a PC in an office … really bad idea. In practical use, you’d have to handle one or two handheld devices, perhaps even without being able to put them down, and perhaps even while dealing with the person you are measuring.

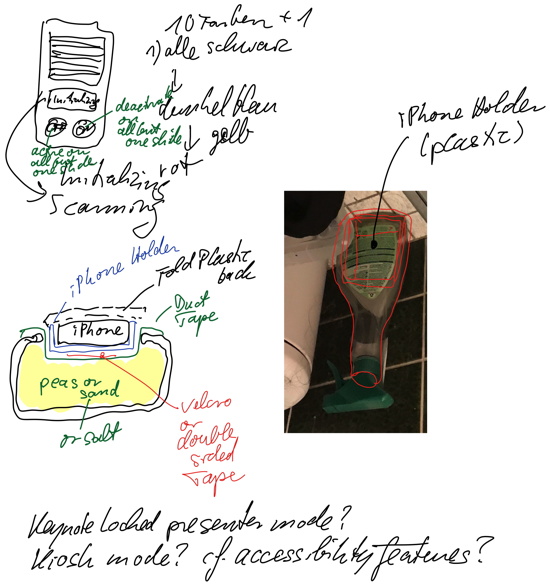

So I suggested just simulating the interaction with mobile devices. Just take the current designs of the mobile handheld measurement device and show them, e.g., on a tablet or on a smartphone. Sure, that looks different from the actual hardware, but you could just take one of their devices, gut it, put a small smartphone in it (where the display is) and do it this way.

Just some Wizard-of-Oz: simulate the measurement device via a website (formatted so that it fits the part of the smartphone screen that is visible through the glass window of the measurement device case). Heck, even using an iOS device with Keynote and simulating the reactions with a Bluetooth remote might work.
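To make the Wizard-of-Oz idea a bit more concrete, here is a minimal sketch of the logic behind such a fake display. It is not the setup described in this post, just one way it could be structured: a hidden operator sends key presses (from a Bluetooth remote or keyboard), and each key decides which mock-up screen the participant sees. The class name, the screen names and the key bindings are all made up for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class WizardOfOzPrototype:
    """Fake device display: a hidden operator picks which screen is shown."""

    screens: list[str]  # ordered mock-up screens (hypothetical names)
    jumps: dict[str, str] = field(default_factory=dict)  # key -> screen to jump to
    index: int = 0  # position of the screen currently shown
    log: list[tuple[str, str]] = field(default_factory=list)  # (key, screen shown)

    @property
    def current(self) -> str:
        return self.screens[self.index]

    def press(self, key: str) -> str:
        """Handle one key press from the operator's remote or keyboard."""
        if key == "next":
            self.index = min(self.index + 1, len(self.screens) - 1)
        elif key == "back":
            self.index = max(self.index - 1, 0)
        elif key in self.jumps:
            self.index = self.screens.index(self.jumps[key])
        # Unknown keys change nothing, so an operator slip does not break the illusion.
        self.log.append((key, self.current))
        return self.current


# Assumed example session: screen names and key bindings are illustrative only.
meter = WizardOfOzPrototype(
    screens=["idle", "scanning", "reading_low", "reading_high"],
    jumps={"h": "reading_high", "i": "idle"},
)
for key in ["next", "next", "h", "x", "i"]:
    meter.press(key)
print(meter.log)
```

The log is the useful by-product here: it records what the participant saw and when the operator intervened, which helps when going through the session afterwards.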

Not sure whether the student will implement this solution — I hope so. Why evaluate something that has nothing to do with its later use? The results are (almost) worthless. But a day or two later I thought about it again — wondering whether it could be realized. And yeah, I thought about it in the bathroom when looking at a spray bottle:

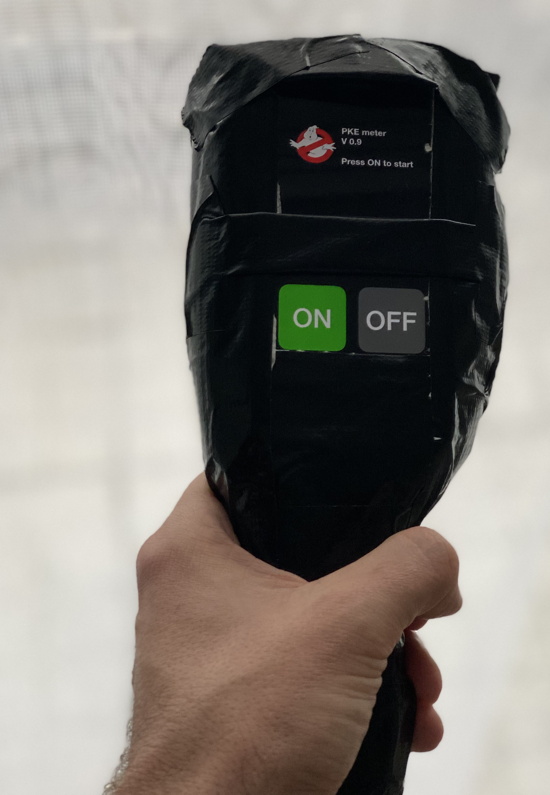

So, I decided to invest 2 or 3 hours and, well, it’s shoddy work, but for a proof-of-concept — it works: The P.K.E. Meter (referring to https://ghostbusters.fandom.com/wiki/P.K.E._Meter):

Or to see it in quick and dirty interaction as a video:

Just an iPhone (5S) with Keynote and a Bluetooth remote (actually, a Bluetooth keyboard, my remote is at work) and some animated GIFs and a (badly) looping sound file. And yeah, the Ghostbusters logo from the first of the two movies. (And the camera is shaking because I was advancing the “slides” with my right toe on the Bluetooth keyboard … yeah, I missed my remote.)

Thing is, four more hours and it would look good. Two days plus the hardware casing to play with, and it would be indistinguishable from the real thing. Using WebEx you could even mirror what the display is showing to your computer, making it even easier to fake the correct reaction of the system.

And that’s something I don’t understand with a few student projects: This is the fun part. Just try it out.